What if drivers could just talk to the map?

Redesigning real-time incident reporting from a three-tap form into a voice-first experience—so drivers could warn others without taking their eyes off the road.

A sea of stuff happening. Almost none of it reported.

Millions of trips happen daily across Southeast Asia. Drivers see flooded roads, sudden closures, surprise checkpoints—the kind of info that could save hundreds of people a detour.

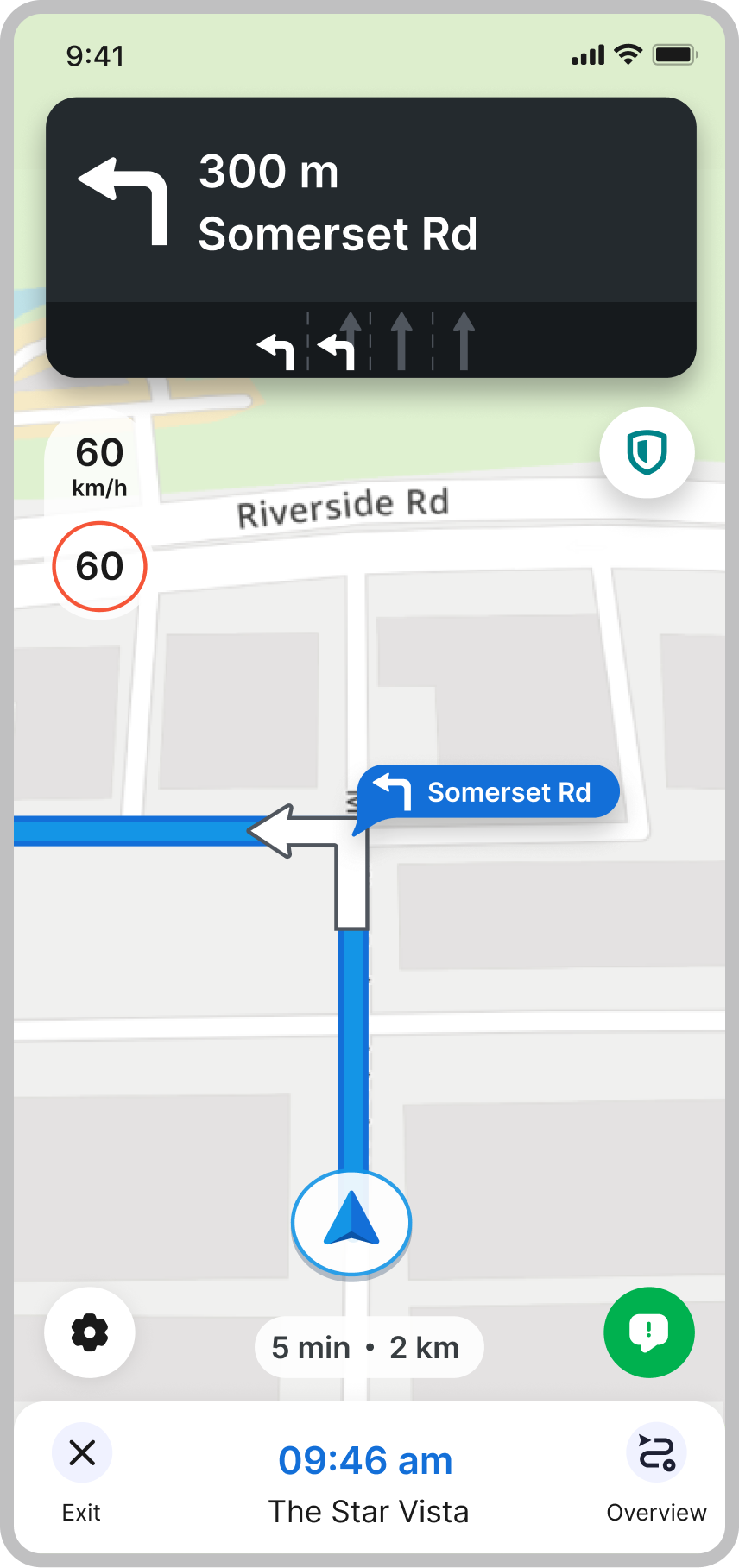

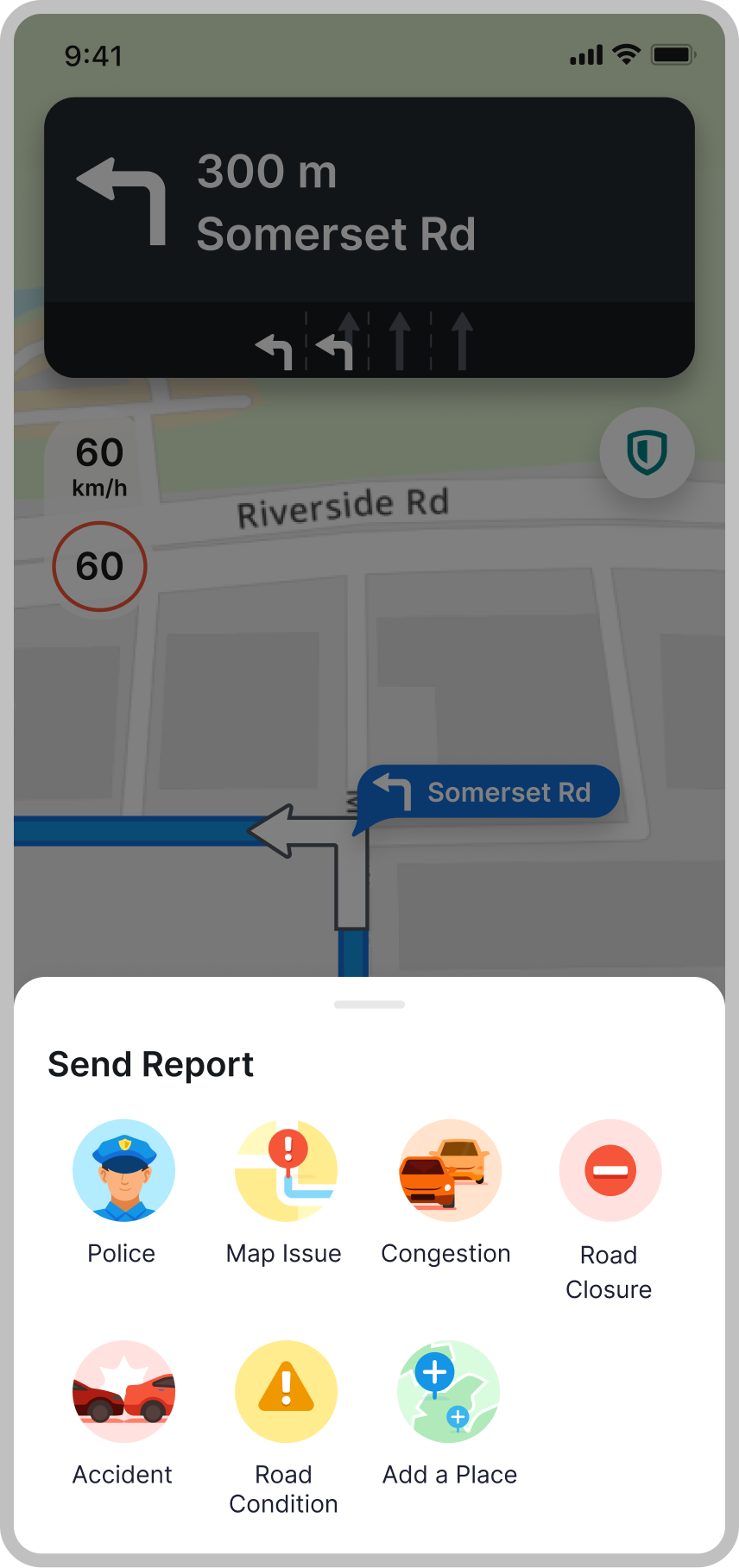

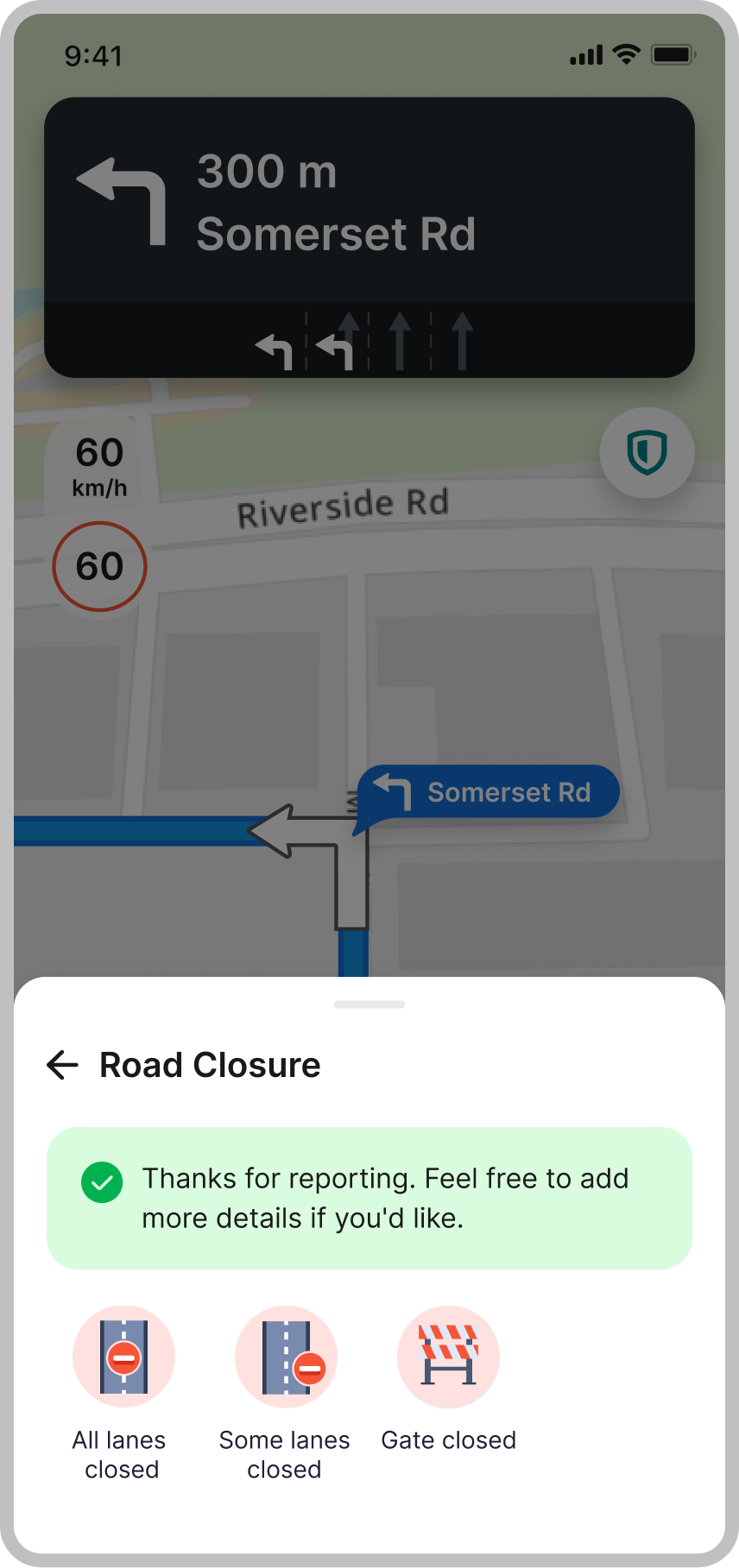

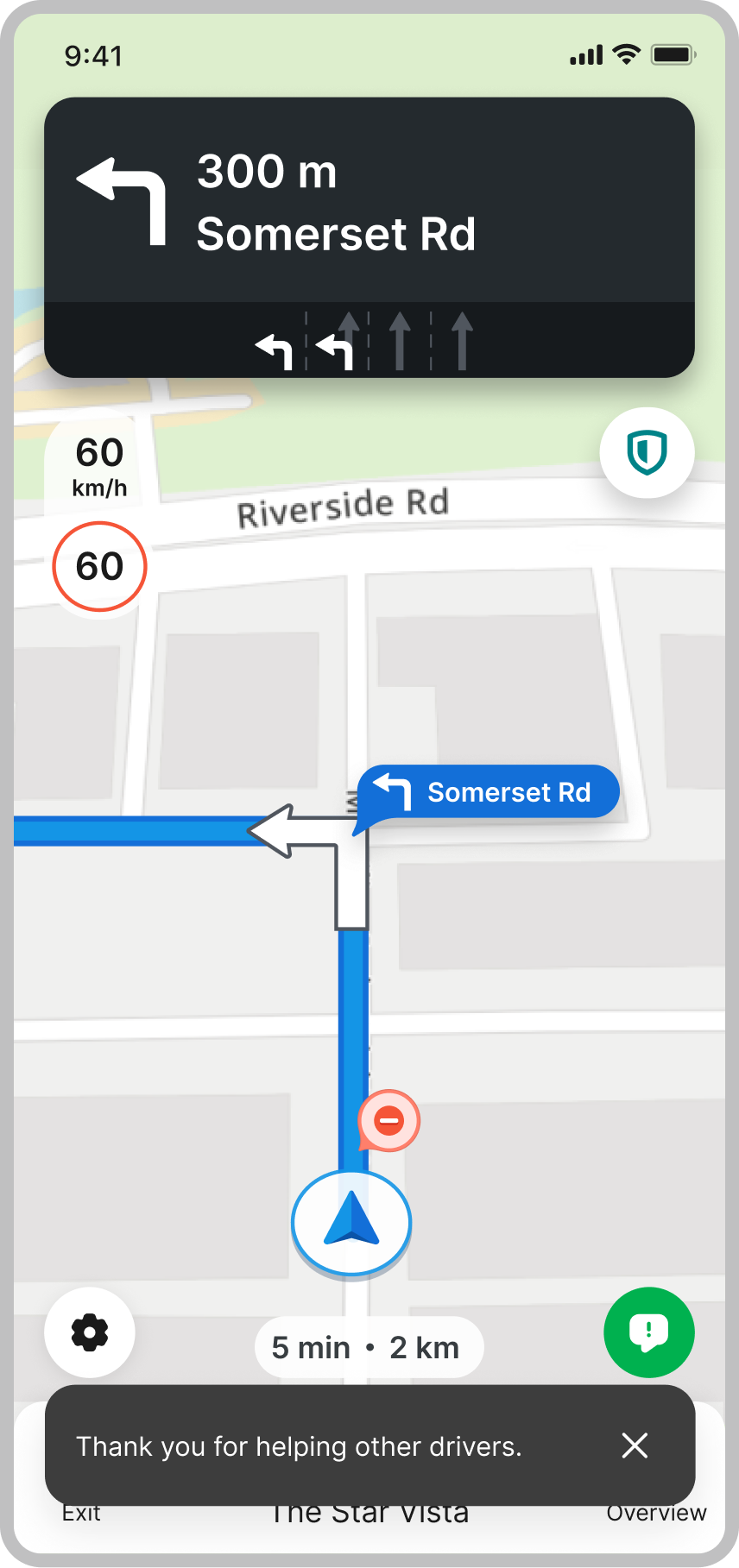

But the old reporting flow asked drivers to work through a three-tap form, at 60 km/h.

Most people just… didn't bother.

Same app. Very different worlds.

All drivers look the same in a database. On the ground, it's a different story—and designing one voice experience for all of them was the first trap to avoid.

The 4-wheel drivers

Enclosed cabin. Quieter environment. Comfortable with voice, and they like looking more "professional" with hands-free tech.

The 2-wheel bike riders

Phone on handlebar. Wind and traffic noise. In Vietnam, Bluetooth earpieces aren't even legal while riding.

Across both groups, one thing was consistent: drivers genuinely want to help each other. In Jakarta, drivers would go out of their way to warn others about flooding—they just needed us to make it worth their effort.

We broke things, watched them fail, adjusted, repeated.

The hackathon prototype proved drivers loved the idea of "just say what you see." The execution took a few rounds.

OS-level speech + snackbar confirmation

Hackathon prototype. Fast to build, but the install barrier killed it before drivers ever heard a word.

Redesigned confirmation sheet + integrated transcription + scripted follow-up

Pulled transcription into the app and replaced the snackbar with a large glanceable sheet—but scripted follow-ups hit a ceiling.

Real-time AI conversation + smart follow-ups

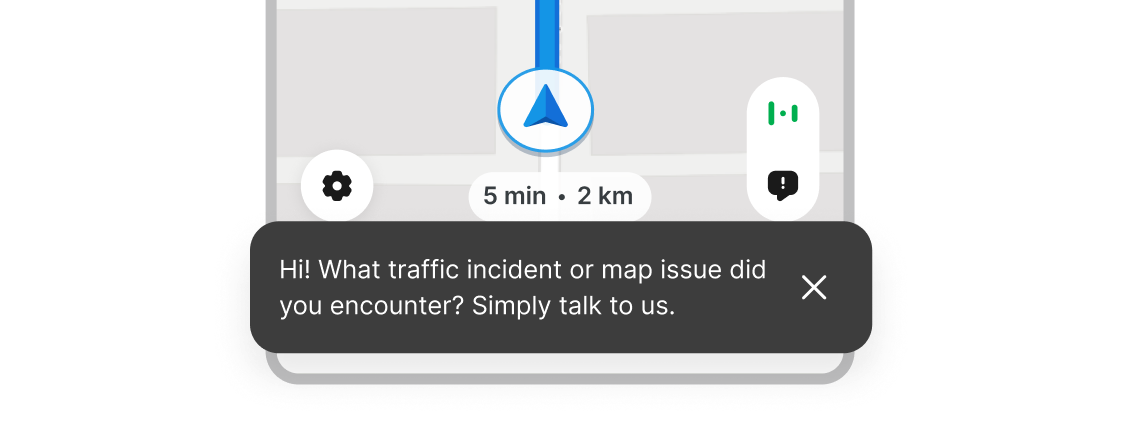

The AI listens to what the driver said and responds in kind. No scripts. No menus.

Every "yes" had a "but."

- Voice is not universally better. It's contextual. With a passenger, many drivers go quiet. In Vietnam, motorbike riders can't legally use earpieces. So voice became a choice, not the default: manual reporting still lives alongside it.

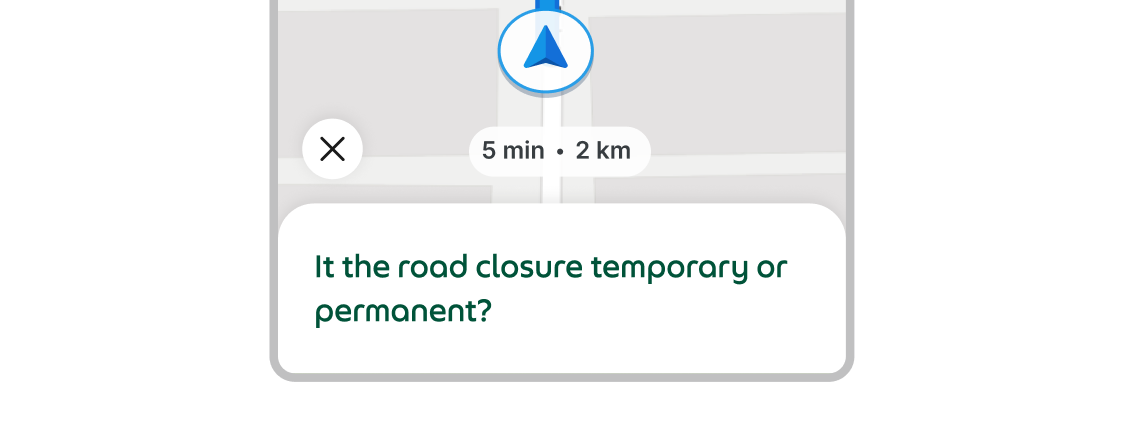

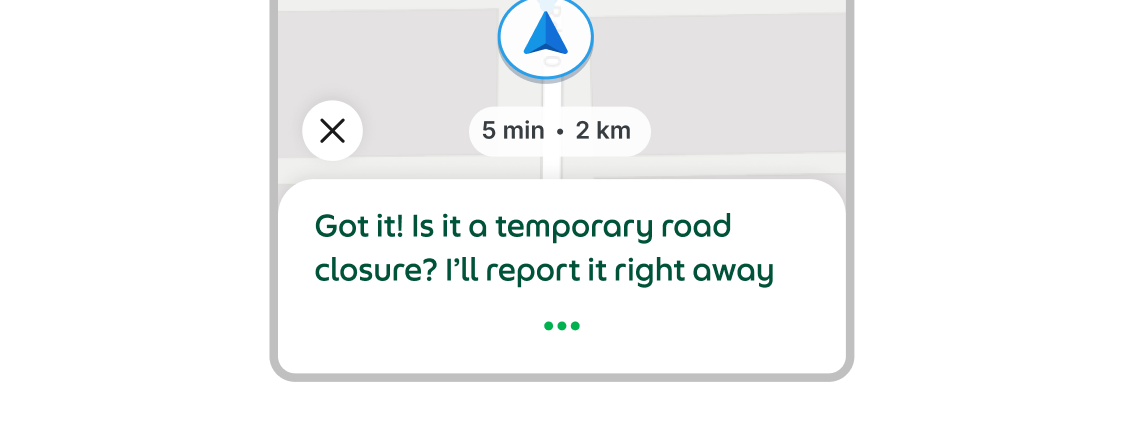

- Richer data = more driver attention. Each follow-up question costs cognitive load. We kept it to the smallest useful set, closer to radio chatter than a call centre script.

- Reports flow through validation before changing a route. A single voice report isn't enough to reroute thousands of people: driver votes, image analysis, and passability checks all run first. The cost of running this pipeline for millions of drivers adds up, so we modelled per-report costs with the data team and explored volume-based options. Designing the feedback so drivers know their report "did something," without exposing that complexity, was its own design challenge.
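The validation step in the last point can be sketched roughly as a gate that every report has to clear. This is a minimal illustration, not the production pipeline: the field names, the thresholds, and the rule that all three signals must agree are assumptions made for the example.

```python
from dataclasses import dataclass

# Hypothetical thresholds, chosen only for illustration.
MIN_CONFIRMING_VOTES = 3
MIN_IMAGE_CONFIDENCE = 0.8


@dataclass
class IncidentReport:
    kind: str                # e.g. "flood" or "closure"
    confirming_votes: int    # other drivers who confirmed the incident
    image_confidence: float  # 0.0-1.0 score from image analysis
    road_passable: bool      # result of the passability check


def should_affect_routing(report: IncidentReport) -> bool:
    """Return True only when every validation signal agrees the road is blocked."""
    has_votes = report.confirming_votes >= MIN_CONFIRMING_VOTES
    image_agrees = report.image_confidence >= MIN_IMAGE_CONFIDENCE
    return has_votes and image_agrees and not report.road_passable


def driver_feedback(report: IncidentReport) -> str:
    """One short line for the driver; the pipeline's complexity stays hidden."""
    if should_affect_routing(report):
        return "Thanks. Your report is now warning drivers nearby."
    return "Thanks. We're checking your report with other drivers."
```

A lone, unconfirmed report still gets an acknowledgement, so the driver knows it "did something," but it never changes a route on its own.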

Tap once. Talk. Done.

The shipped version replaced the three-tap form with one tap and a single voice utterance. But the same pipeline that let drivers report incidents could power almost any action that previously required them to stop and look at their phone. We opened the architecture to other teams. What started as a reporting feature became a foundation.

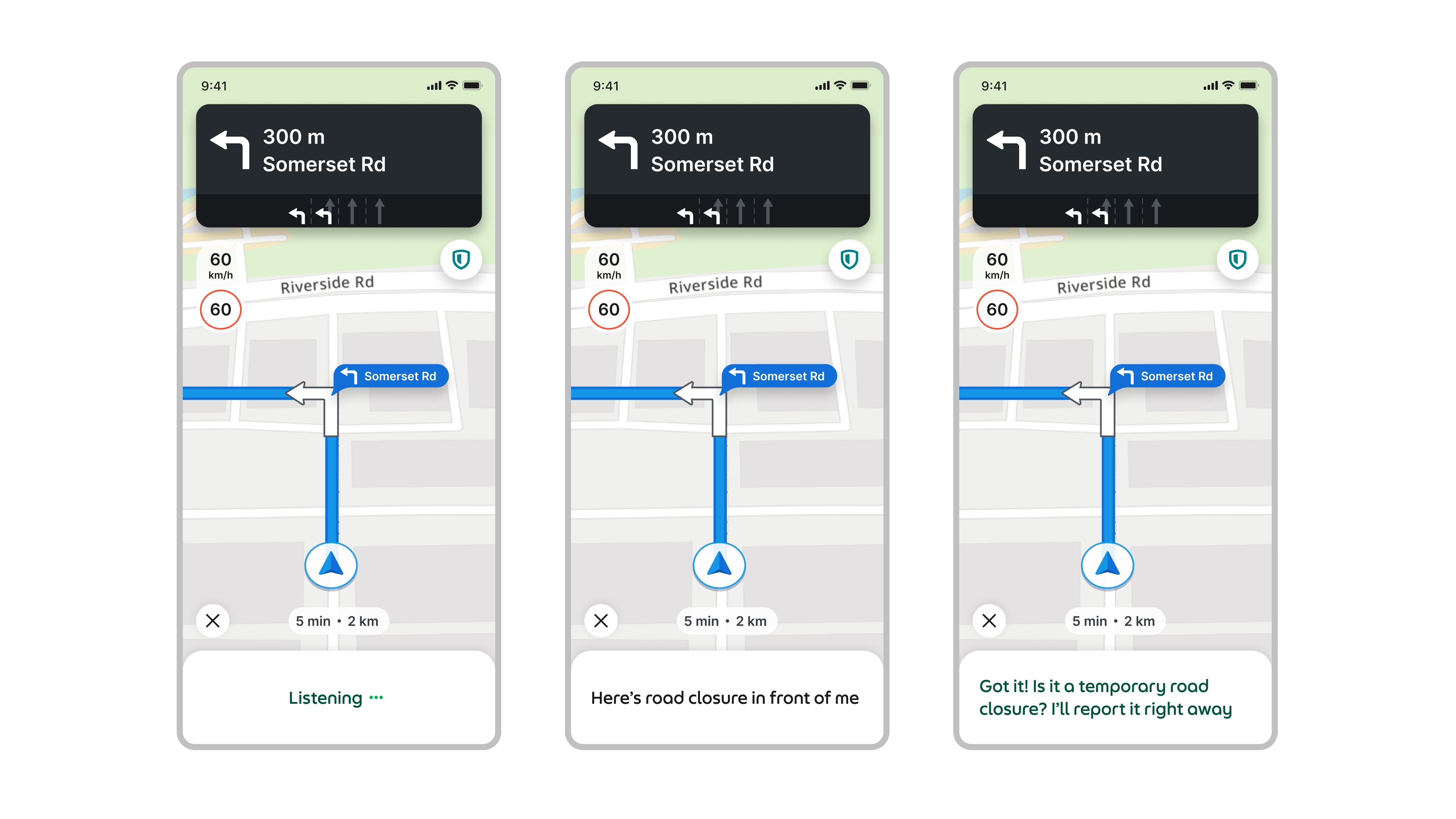

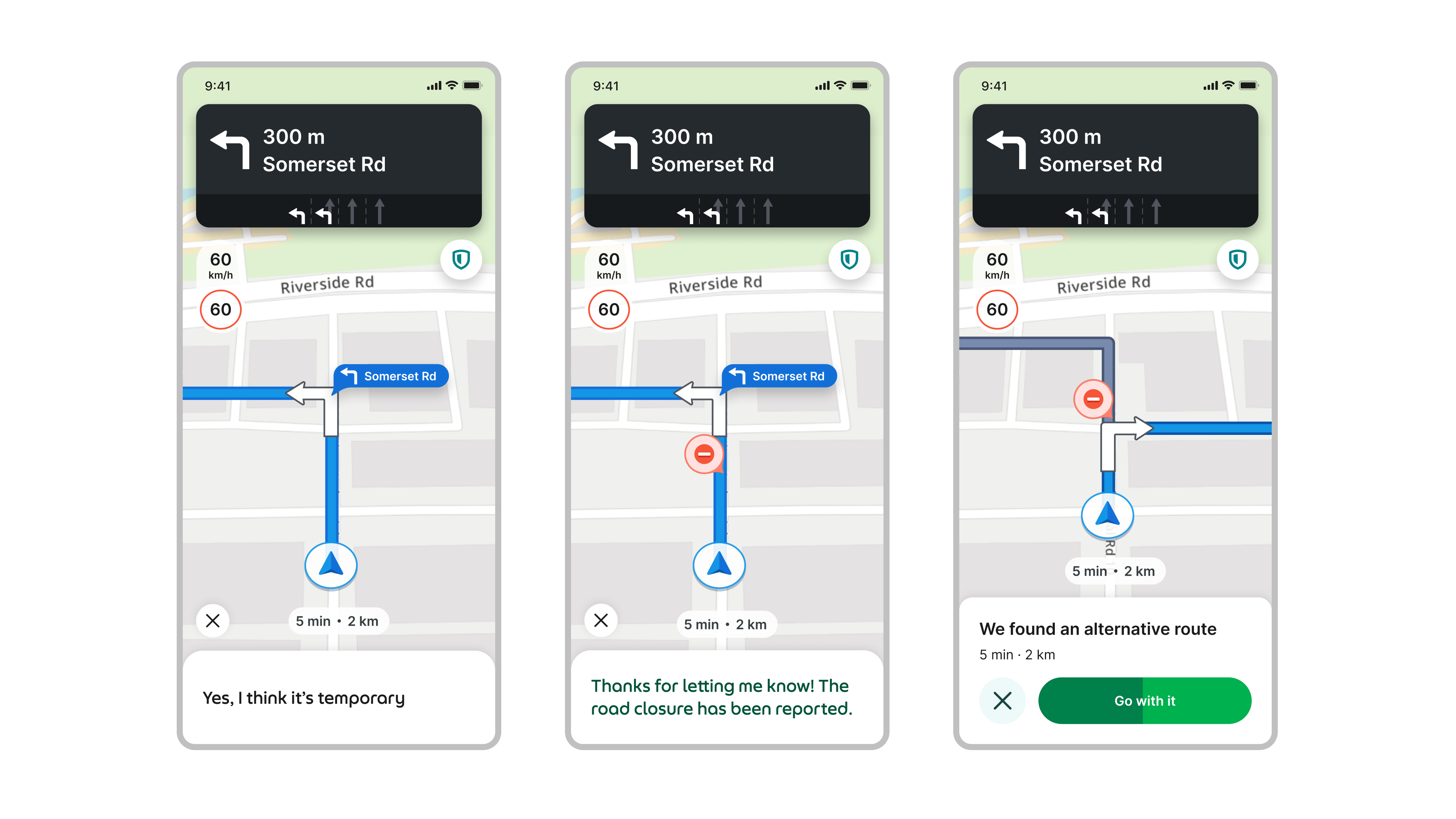

Voice reporting, end-to-end

Tap the microphone, describe what you see. The AI classifies the incident, asks one or two contextual follow-ups, then confirms in a large glanceable sheet. Under 15 seconds. No menus, no category picking.
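The "one or two contextual follow-ups" rule is easiest to see as a hard cap. The sketch below is an assumption-heavy illustration: the shipped flow is AI-driven rather than a lookup table, and the incident kinds, detail names, and questions are invented for the example.

```python
from typing import Dict, List, Tuple

# The driver is never asked more than two follow-up questions.
MAX_FOLLOW_UPS = 2

# Hypothetical follow-ups per incident kind, in priority order.
# Each entry is (detail the question fills in, question to ask).
FOLLOW_UPS: Dict[str, List[Tuple[str, str]]] = {
    "flood": [
        ("passable", "Can cars still get through?"),
        ("depth", "Roughly how deep is the water?"),
        ("lanes", "Which lanes are affected?"),
    ],
    "closure": [
        ("direction", "Which direction is closed?"),
    ],
}


def pick_follow_ups(kind: str, known: Dict[str, str]) -> List[str]:
    """Return the highest-priority questions for details not yet known, capped."""
    pending = [
        question
        for detail, question in FOLLOW_UPS.get(kind, [])
        if detail not in known
    ]
    return pending[:MAX_FOLLOW_UPS]
```

Anything the driver already said in the first utterance is skipped, so a detailed report can go straight to confirmation.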

Query, don't just report

We extended the same channel to let drivers ask questions, not just submit reports. Say "how's the traffic ahead?" and get a spoken summary without leaving the navigation view. Same interface, same utterance—the map talks back.

Reach passengers without pulling over

Drivers often need to contact passengers mid-trip—a late arrival, a pickup change, a quick question. Working with the Communications team, we wired the voice pipeline into messaging and calls. Say "text passenger I'll be 5 minutes late" or "call passenger." The passenger gets a normal message or call. The driver never touches the screen.
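To make the two example commands concrete, here is a small sketch of how an utterance might be mapped to an action. The real pipeline relies on AI understanding rather than fixed patterns; the regular expressions and the `parse_passenger_command` name are hypothetical stand-ins.

```python
import re
from typing import Optional, Tuple

# Hypothetical command patterns; a stand-in for the AI-driven intent detection.
_TEXT_PATTERN = re.compile(r"^text passenger\s+(?P<body>.+)$", re.IGNORECASE)
_CALL_PATTERN = re.compile(r"^call passenger$", re.IGNORECASE)


def parse_passenger_command(utterance: str) -> Optional[Tuple[str, str]]:
    """Map an utterance to ("text", message) or ("call", ""), else None."""
    # Drop surrounding whitespace and trailing sentence punctuation.
    cleaned = utterance.strip().rstrip(" .!?")
    text_match = _TEXT_PATTERN.match(cleaned)
    if text_match:
        return ("text", text_match.group("body").strip())
    if _CALL_PATTERN.match(cleaned):
        return ("call", "")
    return None
```

Utterances that match neither pattern return `None`, so they can fall through to reporting or queries instead of triggering a message by mistake.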

Find parking before you arrive

With the Maps team, we extended voice to parking. Ask for nearby spots on the way to drop-off and get a ranked list of the nearest and cheapest options, with navigation handled automatically.
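One way to read "nearest and cheapest" is a sort by distance with price as the tie-breaker. That ordering is an assumption, as are the `ParkingSpot` fields and the default limit of three results; the actual ranking logic isn't described here.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class ParkingSpot:
    name: str
    distance_m: float      # distance from the drop-off point, in metres
    price_per_hour: float  # in local currency


def rank_spots(spots: List[ParkingSpot], limit: int = 3) -> List[ParkingSpot]:
    """Order spots by distance, then price, and keep a short spoken-friendly list."""
    ordered = sorted(spots, key=lambda s: (s.distance_m, s.price_per_hour))
    return ordered[:limit]
```

Keeping the list short matters more in voice than on screen: a driver can hold two or three options in mind, not ten.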

We also rethought the incident icons

Icons that looked clever in Figma were consistently mis-identified on the road. We ran card-sorting sessions with drivers, then rebuilt the set from familiar road-sign patterns. Sounds obvious. Wasn't obvious until we watched it fail.

Numbers that (honestly) surprised us.

In pilots, the majority of invited drivers actively used the feature—and nearly half completed at least one follow-up question. Strong signal that drivers tolerate short, meaningful back-and-forths when they feel useful.

Flood reporting noise in Singapore dropped dramatically when voice reports were combined with external sources—meaning ops teams could finally trust the signal instead of manually filtering it.

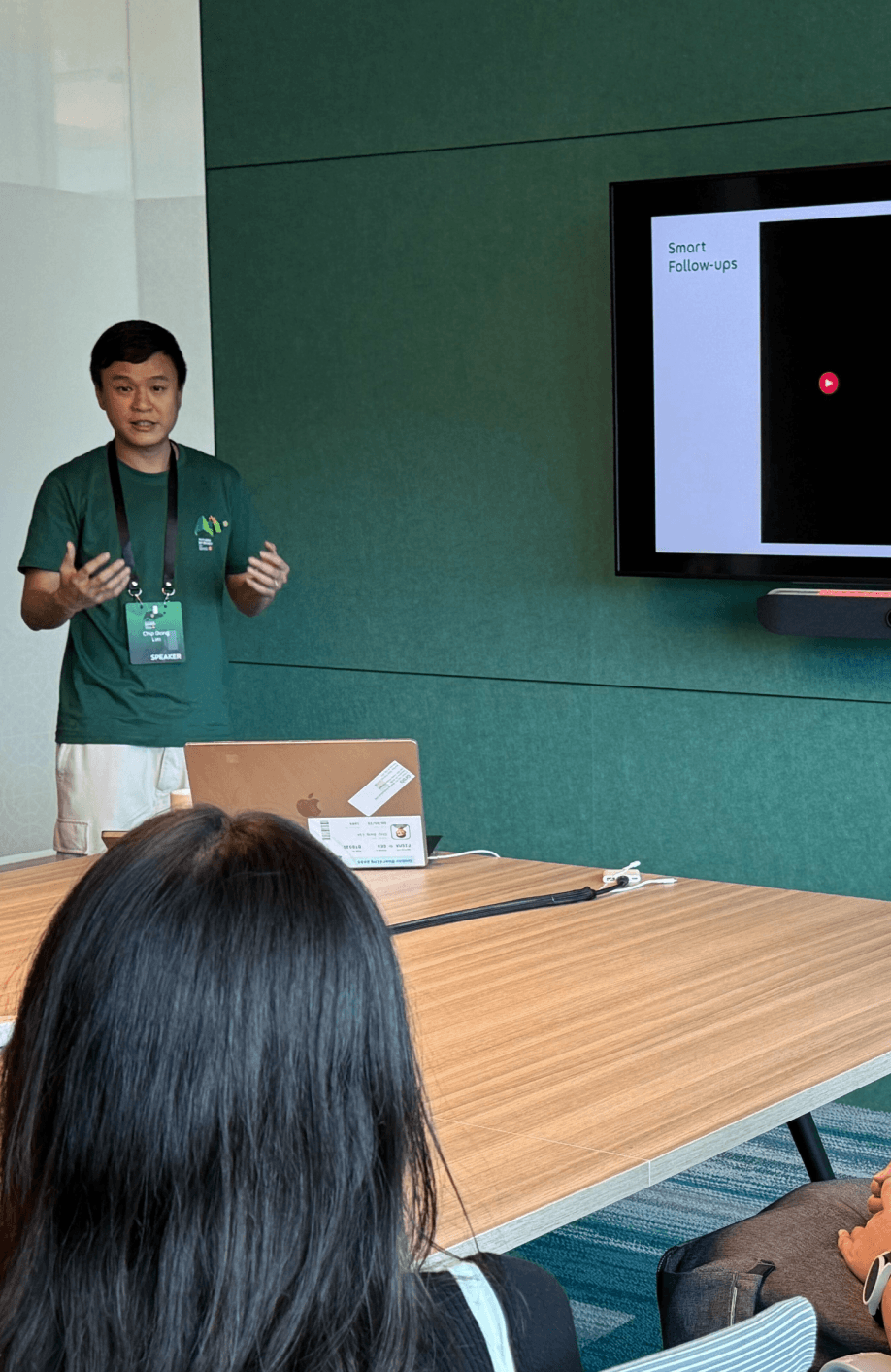

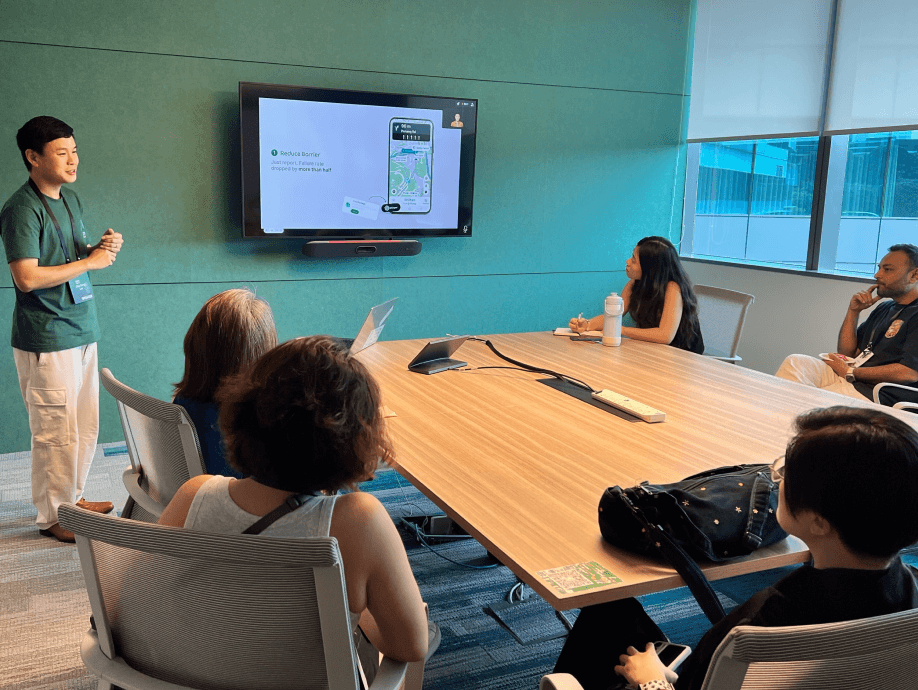

Taken outside.

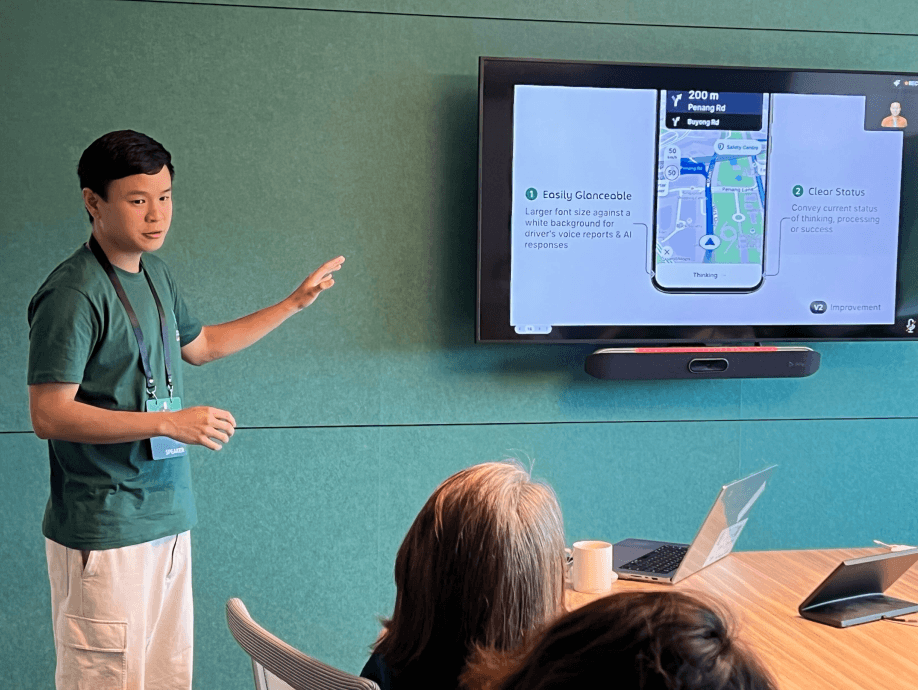

The work found its way onto a public stage as a lightning talk at SG60: Future by Design with Grab, during Singapore Design Week 2025, held at Grab HQ. The talk, "Can Your Voice Fix Traffic?", was given in front of Singapore's design community.

What I'd tell myself at the start.

Voice is a context, not a feature

Drivers don't want "a voice assistant." They want to be safe and efficient. Sometimes that's voice. Sometimes that's quiet text. Sometimes that's doing nothing because a passenger is watching.

Closing the loop matters more than clever AI

Enabling voice reporting also opened a channel for map errors, payment issues, and policy confusion. The real win wasn't smarter classification—it was making sure those signals reached the right team.

Defend cognitive load like it's personal

Whenever someone wanted "just one more question," I'd reach for the same mental image: a driver in Jakarta rain at 11pm, passenger in the back, trying not to crash. If it doesn't work for that person, it probably doesn't deserve to ship.

"You could have been anywhere on the internet. Thanks for reading."
